Automating Endpoint Updates with WUfB

Managing Windows endpoints is not core to Michelin’s business of manufacturing tires or providing service to our customers. Keeping devices secure and up to date is a critical activity, but it doesn’t add business value the way a new customer-facing application does. The more of these non-core but critical tasks we automate, the more time and effort we have to focus on customer-facing solutions. The less complex and more modern our environment becomes, the easier this automation becomes. The more we automate, the more we increase our patching velocity, but does this increased security come with a loss of control?

The Problem

The automation of our endpoint updates occurred almost entirely by accident. I was trying to figure out how to upgrade 53,000 machines from Windows 10 feature update 1709 to feature update 1909. I designed a process built around our internal System Center Configuration Manager (SCCM) infrastructure. We used a task sequence containing the In-Place Upgrade (IPU) mechanism we had used to upgrade from Windows 7 to Windows 10. I built the design primarily around our on-premises infrastructure, which served the majority of our users. During testing, the upgrade process failed approximately 20% of the time. The machines that failed were so broken that they had to be reimaged from scratch. Our target failure rate was less than 5%, and machines that failed were supposed to roll back to a usable state. Not only was reimaging an unpleasant upgrade path for users, we did not have the time or resources to reimage 20% of the estate every time we upgraded to a new version of Windows. As much as we tweaked the task sequence to detect and remediate the different potential problems, we could not get the failure rate close to an acceptable level.

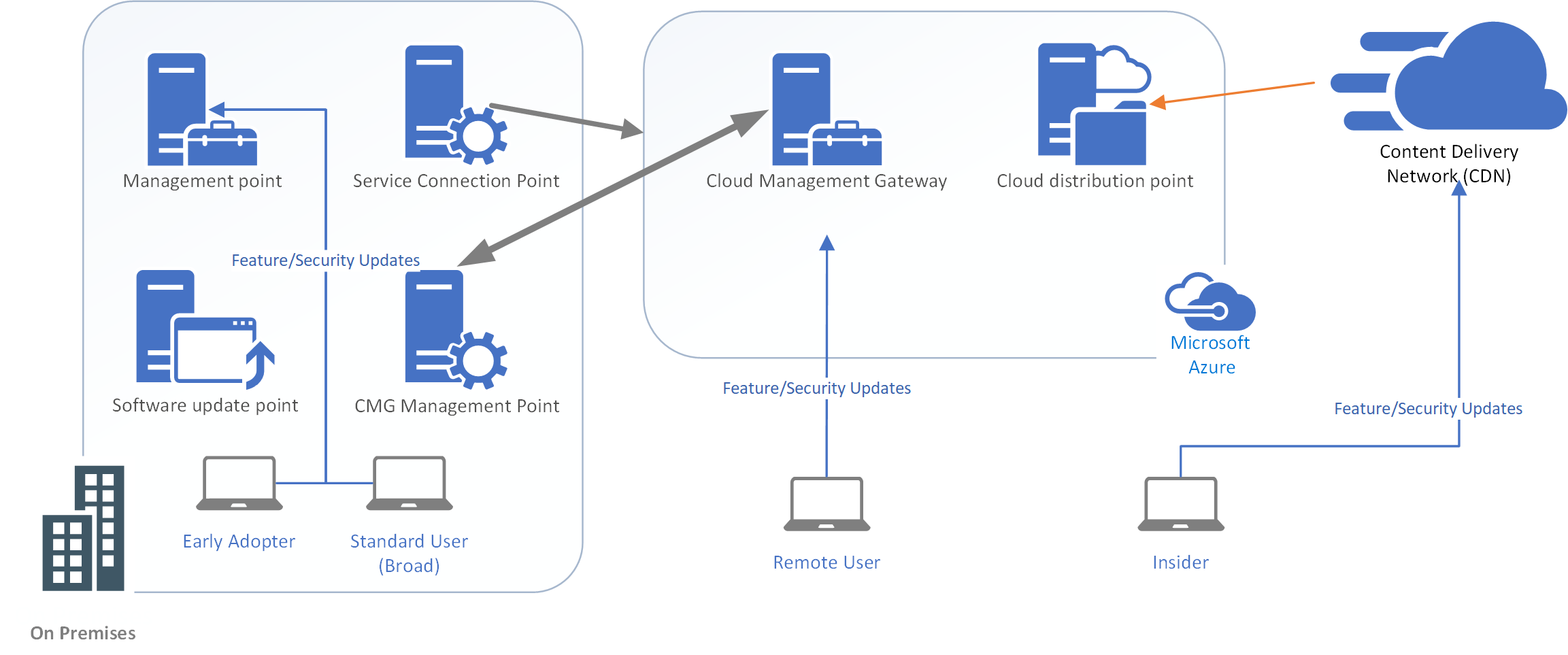

Additionally, we needed a way to update remote workers. Our plan to use our brand new Cloud Management Gateway (CMG) wasn't working. All testers located behind the Great Firewall failed to upgrade, and the process returned generic error codes that gave us nothing to troubleshoot with. When the IPU process was successful, it took between 3 and 6 hours, during which the user was locked out of their computer. After weeks of attempts, little was working as designed and the feedback from our testers was highly negative.

The Solution

Out of desperation, I looked for other update mechanisms and new ways of approaching the problem. I decided to give Windows Update for Business (WUfB) a try. WUfB uses Microsoft's Windows Update service to determine what updates are available for a device. It has a built-in safety hold, so that when a known incompatible firmware, driver or device is detected, the machine will not begin the update. The Windows 10 client downloads compatible updates directly from Microsoft's content delivery network. Instead of targeting a group of client machines for upgrade and delivering the content through our on-premises network of 137 SCCM servers, the machines would be set to detect updates and retrieve them on their own directly from Microsoft. This automated update process would occur at intervals set through Group Policy Objects and would not be subject to our normal command and control process through SCCM. We would essentially set our machines to the target version of Windows and the target timing, and trust that the endpoints would stay current. While this is a common approach for consumer devices, it was the opposite of the approach we typically take in the enterprise.

After a few registry tweaks, I gave it a go. WUfB upgraded my test machine perfectly on the first try. Unlike the task-driven IPU process, which was taking a miserable 3 to 6 hours to update Windows 10, my machine completed the update in 30 minutes. I reset my machine, took it home, and tried the test again remotely. The upgrade was actually faster off the corporate network. Success! We expanded our trial to include local testers and each upgrade ended in success. We had some colleagues try it in France and they loved it. Even our users behind the Great Firewall in China were successful. The new process wasn’t perfect. We still had some machines that were put on a safety hold, and some T470s with a certain BIOS level ended in a BSOD, but our overall error rate dropped to 2%. Our testers loved the new process. It seemed familiar to them since it looked like a standard Windows 10 home update. It was faster than any update the users had seen to date. We even had a saying: “Friends don’t let friends IPU….”

The Immune Response

In a recent blog post on innovation, Scott Hughes wrote about how the corporate immune system has a tendency to kill innovative new ideas. The immune response kicked in the moment I suggested we replace our current update process using SCCM with the new WUfB solution. I started to hear the following types of statements…

“We’ll lose control if we do that… Our environment is too critical…How will we roll back? How can we trust the machines to update themselves?”

These comments implied that we had complete control over the patching of all our Windows endpoints in the estate. At first glance, this seemed to be true, but was it really? Anyone who has worked with SCCM knows that one of the biggest challenges is keeping the clients healthy. When I analyzed the estate more closely, I found that approximately 10% of the SCCM clients were broken and that these clients were excluded from our existing patching reports. We didn’t really have the level of control we thought we had. While we did have the capability of rolling back a patch in SCCM, we could not roll back a feature update as some had thought.

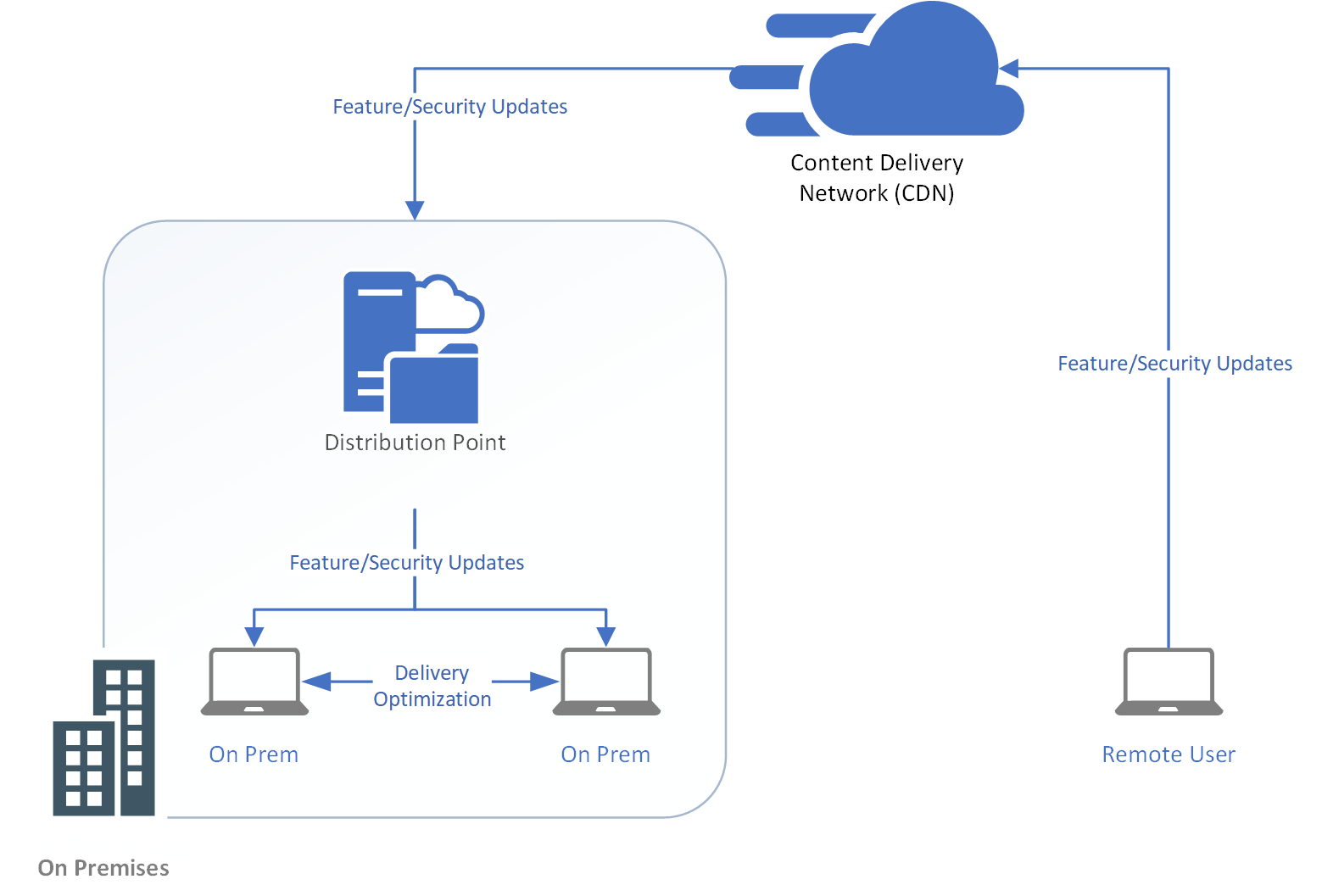

Network Implications

During the rollout, a concern was raised about network bandwidth implications. If we were to use Windows Update to download the feature updates, that would be about 2 GB per computer. Wouldn’t that saturate our network? To address this concern, we decided to use two caching technologies. The first was Microsoft Connected Cache (MCC) and the second was Delivery Optimization (DO). MCC uses our existing SCCM infrastructure so that the 135+ distribution points spread around Michelin sites act as cache servers for the clients at each local site. In addition, the peers on the network share the downloaded content with each other so that the cache servers do not get overloaded.
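To put rough numbers on that concern, here is a back-of-the-envelope sketch in Python. The machine count, update size and number of distribution points come from this post; everything else is a simplifying assumption for illustration: every machine sits at a site with a cache server, each cache server pulls the update from Microsoft exactly once, and remote workers are ignored. It is an idealized best case, not a measurement from our network.

```python
# Back-of-the-envelope estimate of Internet download volume for one feature update.
# Figures from the post: 53,000 machines, ~2 GB per update, 135+ distribution points.
MACHINES = 53_000
UPDATE_GB = 2
CACHE_SERVERS = 135


def internet_download_gb(machines, update_gb, cache_servers=0):
    """Return the GB pulled from Microsoft's CDN over the Internet links.

    Assumption (hypothetical, best case): when cache servers exist, each one
    fetches the update once and serves every local client, directly or via peers.
    """
    if cache_servers == 0:
        # Every machine downloads its own copy from the CDN.
        return machines * update_gb
    # Only the cache servers download from the CDN.
    return cache_servers * update_gb


no_cache = internet_download_gb(MACHINES, UPDATE_GB)
with_cache = internet_download_gb(MACHINES, UPDATE_GB, CACHE_SERVERS)

print(f"No caching:   {no_cache:,} GB")
print(f"With caching: {with_cache:,} GB")
print(f"Reduction:    {1 - with_cache / no_cache:.1%}")
```

Real traffic will land somewhere between the two figures, since home and remote users download directly from Microsoft and not every client will find a warm cache or a peer.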

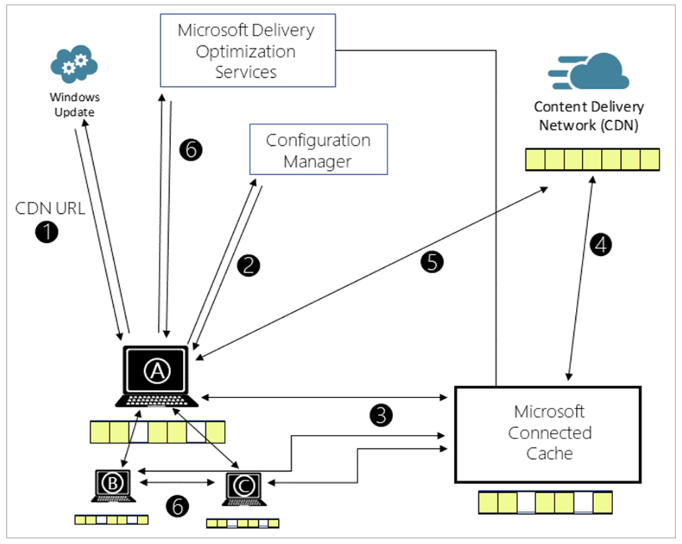

How do Microsoft Connected Cache and Delivery Optimization work together?

1. Client checks for updates and gets the address for the content delivery network (CDN).

2. Configuration Manager configures Delivery Optimization (DO) settings on the client, including the cache server name.

3. Client A requests content from the DO cache server.

4. If the cache doesn't include the content, then the DO cache server gets it from the CDN.

5. If the cache server fails to respond, the client downloads the content from the CDN.

6. Clients use DO to get pieces of the content from peers.
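The steps above can be sketched as a toy simulation in Python. This is not how the Delivery Optimization client is implemented and it calls no Microsoft API. The `CacheServer` class and `download` function are hypothetical names made up for illustration, and the model assumes a client picks a single source per download and prefers a peer when one already has the content. In practice DO can pull pieces from several sources at once.

```python
from dataclasses import dataclass, field


@dataclass
class CacheServer:
    """Toy stand-in for a DO cache server on a distribution point."""
    online: bool = True
    store: set = field(default_factory=set)
    cdn_fetches: int = 0  # how many times this cache went out to the CDN

    def get(self, content_id):
        if not self.online:
            raise ConnectionError("cache server did not respond")
        if content_id not in self.store:
            # Step 4: cache miss, so the cache server fetches from the CDN.
            self.cdn_fetches += 1
            self.store.add(content_id)
        return "cache"


def download(content_id, cache, peers_with_content):
    """Return the source a client would use: 'peer', 'cache' or 'cdn'."""
    if content_id in peers_with_content:
        return "peer"  # Step 6: content is available from a local peer.
    try:
        return cache.get(content_id)  # Step 3: ask the DO cache server.
    except ConnectionError:
        return "cdn"  # Step 5: cache server unavailable, go to the CDN.


cache = CacheServer()
peers = set()  # content IDs already held by peers on the local network

first = download("1909", cache, peers)   # no peers yet, served by the cache
peers.add("1909")                        # the first client can now share it
second = download("1909", cache, peers)  # served by a peer
cache.online = False
third = download("1909", cache, set())   # no peers, cache down, falls back to CDN

print(first, second, third, cache.cdn_fetches)
```

The point of the sketch is the fallback order: the Internet link is only touched when the cache server has to fill a miss or fails to respond.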

As an additional safeguard, we implemented QoS tagging so that Windows Update traffic received the lowest possible priority. This keeps it from saturating our Internet connections and crowding out more important traffic.

What About the End User Experience?

The final consideration was not technical but rather the upgrade experience from the user’s perspective. With SCCM, the IPU took 3 to 6 hours. The download, install and update were done in the foreground, locking the user out of their computer during the entire process. With WUfB, the download and install were done in the background, so the user was interrupted for only about 30 minutes while the machine rebooted and applied the update. The process mimics the normal update process users experience at home, so it felt familiar to them.

Summary

It's frustrating to fail, but sometimes failure leads to significant leaps forward in thinking. Our initial challenges forced us to rethink our current architecture and our current upgrade process. In the end, we now use a simpler, more reliable automated update process. We are one of a few companies that have adopted WUfB at this scale, and we are looking to the next step of automating our updates.

Coming Soon

We are currently working to modernize the deployment of settings in our environment by migrating from an on-premises infrastructure to cloud-deployed settings. I will document that step of our journey toward modern device management in my next blog post.