Cloud Management Gateway connectivity - A case study

For my first post here, I will walk through a case we spent time on last summer (not so recent anymore, but hey, that is life!). Hopefully you will find it interesting.

Abstract (aka "I have no time, tell me what I need to know"): always issue PKI certificates with the Common Name (CN, found in the Subject field) repeated in the Subject Alternative Name (SAN) field, even if you have no alternative names. This will save you tons of trouble.

Cloud Management Gateway overview

The Cloud Management Gateway (CMG) is a feature of modern SCCM (now Microsoft Endpoint Configuration Manager) environments that lets a company extend its reach over the Internet and manage machines that are not connected to the corporate network. It does so through a cloud service in Azure that roaming clients connect to, and that "bridges" over to the on-premises SCCM infrastructure. Typical use cases for the CMG include deploying patches or applications and gathering inventory data while clients are on the Internet: basically the same use cases as "traditional" SCCM, but with a broader reach. Quite interesting in these troubled times, right? Unfortunately, work had only just started on ours when the crisis hit, so... we were scrambling to make it work.

Check out this link for an overview of the CMG and what you can do with it: https://docs.microsoft.com/en-us/mem/configmgr/core/clients/manage/cmg/plan-cloud-management-gateway

It should be noted that the CMG can be set up using either your own internal PKI or public certificates. We chose our internal PKI at first (more on that later, read on).

The Problem and first findings

People had been at it for quite a while and nothing was working, so I eventually got pulled in. Not knowing much about the CMG or its inner workings, I started reading (from the link above) and quickly discovered there was a built-in tester in the SCCM console to help troubleshoot the CMG. Great! Hopefully it would be useful. Of course, the results were... not that green.

It very quickly became evident there were multiple issues to deal with and, as usual with Microsoft, we had to carefully craft the list of endpoints (Fully Qualified Domain Names, or FQDNs, and wildcards) to open up on our proxies for this to work properly. Hint: do not forget the Certificate Revocation List (CRL) and Online Certificate Status Protocol (OCSP) endpoints.

- *.akamaiedge.net
- *.akamaitechnologies.com
- *.amazontrust.com
- *.cloudapp.net
- *.cnnic.cn
- *.digicert.com
- *.d-trust.net
- *.entrust.net
- *.geotrust.com
- *.globalsign.com
- *.identrust.com
- *.letsencrypt.org
- *.microsoft.com
- *.microsoftonline.com
- *.microsoftonline-p.com
- *.microsoftonline-p.net
- ...
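If you want to double-check such an allowlist against the revocation endpoints your certificates actually reference, here is a minimal Python sketch. It assumes certificate dicts shaped like the output of Python's `ssl.SSLSocket.getpeercert()` (which exposes the `crlDistributionPoints`, `OCSP` and `caIssuers` URLs), and it assumes a proxy wildcard semantics where `*.example.com` matches any subdomain but not the bare domain; check how your own proxy really behaves. The allowlist excerpt, the function names and the certificate URLs (including `pki.contoso.example`) are all hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical excerpt of a proxy allowlist, in the style of the list above.
ALLOWLIST = ["*.digicert.com", "*.microsoft.com", "*.cloudapp.net"]


def host_matches(host: str, pattern: str) -> bool:
    """Assumed semantics: '*.example.com' matches any subdomain of
    example.com (one or more labels deep) but not example.com itself."""
    host = host.lower().rstrip(".")
    pattern = pattern.lower().rstrip(".")
    if pattern.startswith("*."):
        return host.endswith(pattern[1:])  # pattern[1:] is ".example.com"
    return host == pattern


def revocation_hosts(cert: dict) -> set[str]:
    """Hostnames behind the CRL, OCSP and CA issuer URLs of a certificate
    dict shaped like the output of ssl.SSLSocket.getpeercert()."""
    hosts = set()
    for key in ("crlDistributionPoints", "OCSP", "caIssuers"):
        for url in cert.get(key, ()):
            host = urlsplit(url).hostname  # lowercased, port stripped
            if host:
                hosts.add(host)
    return hosts


def missing_from_allowlist(cert: dict, allowlist: list[str]) -> set[str]:
    """Revocation-related hosts that no allowlist entry covers."""
    return {h for h in revocation_hosts(cert)
            if not any(host_matches(h, p) for p in allowlist)}


# Hypothetical certificate: public CA endpoints plus an internal PKI CRL.
example_cert = {
    "OCSP": ("http://ocsp.digicert.com",),
    "caIssuers": ("http://cacerts.digicert.com/ExampleCA.crt",),
    "crlDistributionPoints": (
        "http://crl3.digicert.com/ExampleCA.crl",
        "http://pki.contoso.example/IssuingCA.crl",
    ),
}
print(missing_from_allowlist(example_cert, ALLOWLIST))  # {'pki.contoso.example'}
```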

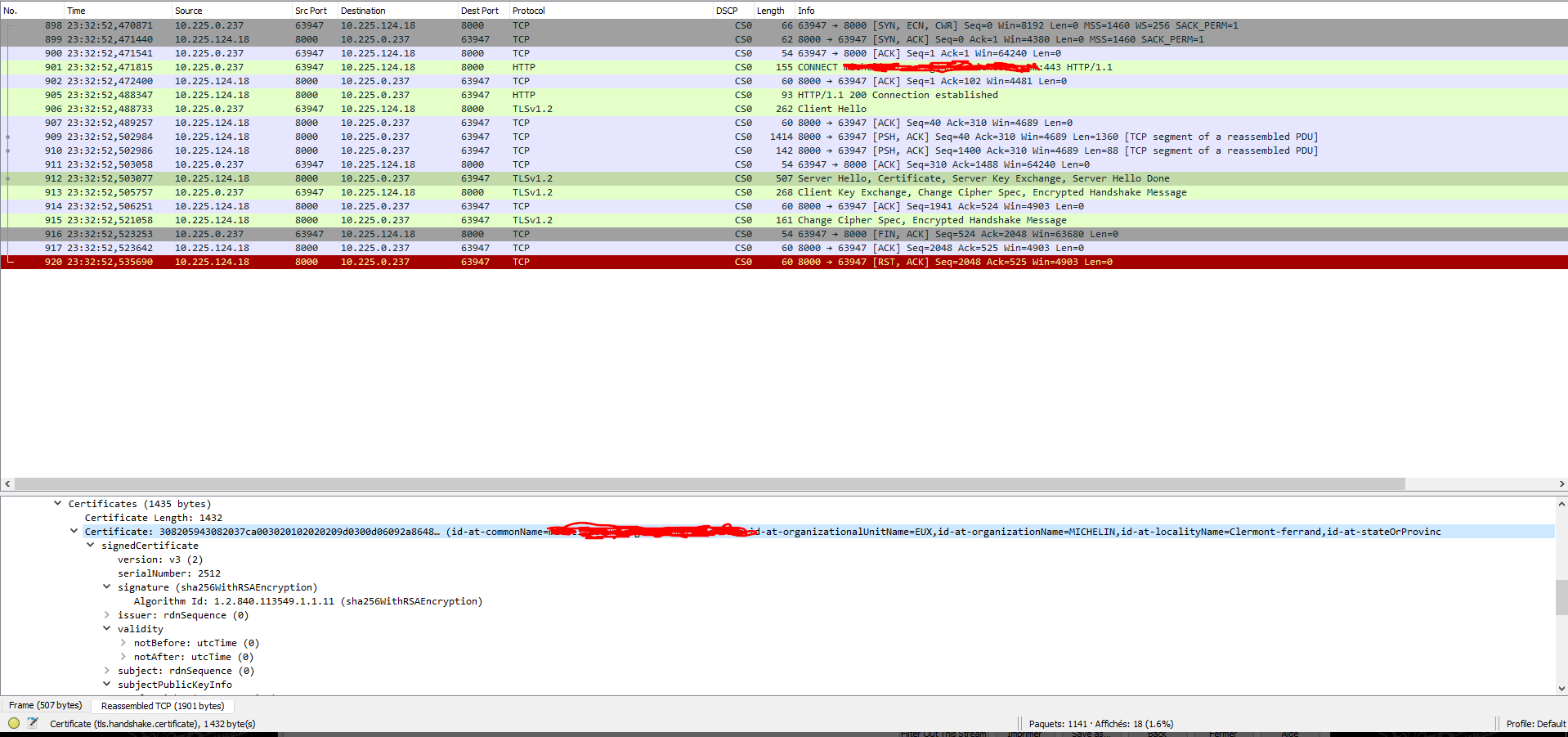

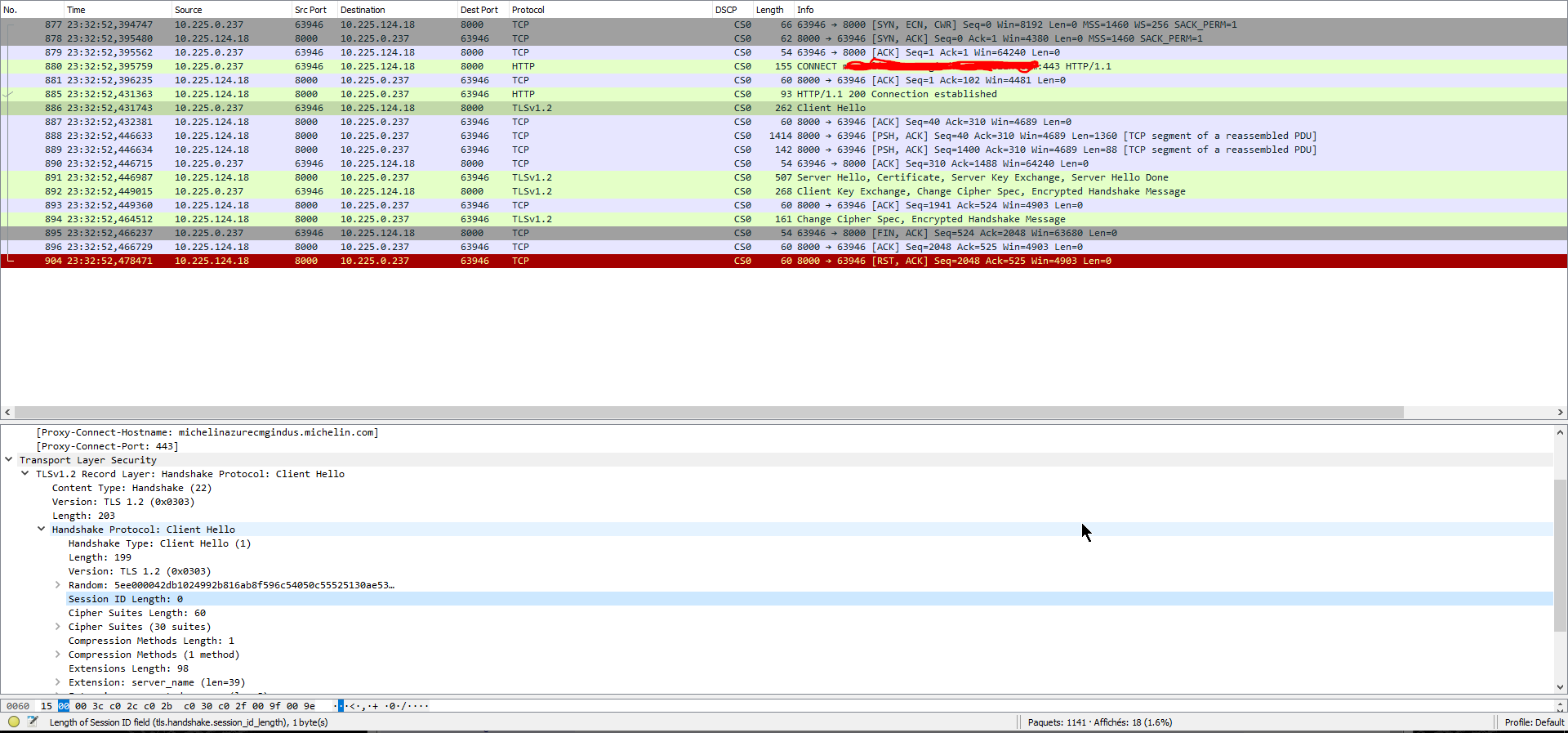

Cutting a long story short (sparing you the less interesting issues: proxy shenanigans, idle timeouts, outdated cipher sets), we found ourselves in a situation where HTTPS connections from our on-premises SCCM Primary server to the CMG were mysteriously failing. Internal SCCM logs were not that detailed, but did point towards crypto issues. Resorting to network traces, working hand in hand with our NETwork friends, quickly taught us that something was indeed not right with the TLS handshake between our Primary servers and the CMG in Azure.

ERROR: Failed to build Http connection 51a4dd42-4559-4d46-8fb9-12ba63841fe9 with server ABCDEFGHIJKLMN.OPQRSTUVW.XYZ:443. Exception: System.Net.WebException: HTTP CONNECTION: Failed to send data to proxy server ---> System.Net.WebException: The underlying connection was closed: Could not establish trust relationship for the SSL/TLS secure channel. ---> System.Security.Authentication.AuthenticationException: The remote certificate is invalid according to the validation procedure.~~ at System.Net.S...

[70, PID:15668][06/04/2020 16:22:46] :System.Net.WebException\r\nThe underlying connection was closed: Could not establish trust relationship for the SSL/TLS secure channel.\r\n at System.Net.HttpWebRequest.GetResponse()

at Microsoft.ConfigurationManagement.AdminConsole.AzureServices.CMGAnalyzer.backgroundWorker_DoWork(Object sender, DoWorkEventArgs e)\r\nSystem.Security.Authentication.AuthenticationException\r\nThe remote certificate is invalid according to the validation procedure.\r\n at System.Net.Security.SslState.StartSendAuthResetSignal(ProtocolToken message, AsyncProtocolRequest asyncRequest, Exception exception)

In fact, results were inconsistent: some connections to the CMG were seemingly OK, others completed the TLS handshake but then only showed one-way data exchanges from the SCCM server (acting as the client) to the CMG (acting as the server), and others failed to complete the handshake altogether. Isolating the connections triggered by the Connection Analyzer revealed a very repeatable pattern: the initial connection would go through and... time out with absolutely no data exchanged. Subsequent connections triggered by the analyzer would not even complete the TLS handshake.

Testing with different proxies gave us the same results, so clearly something was wrong on one side (or both), but what could it be?

=> We thought we might be failing the certificate checks, but the certificate stores on the Primary SCCM server were properly set up with the Root CA and Intermediate CA of the CMG certificate.

=> The CRL was accessible to the SCCM server, and nothing in the traces showed any attempt to fetch it, let alone a failure to do so.

=> The .pfx bundle uploaded to Azure as part of the original setup contained both the Root CA and the Intermediate CA.

Of the usefulness of simple tools...

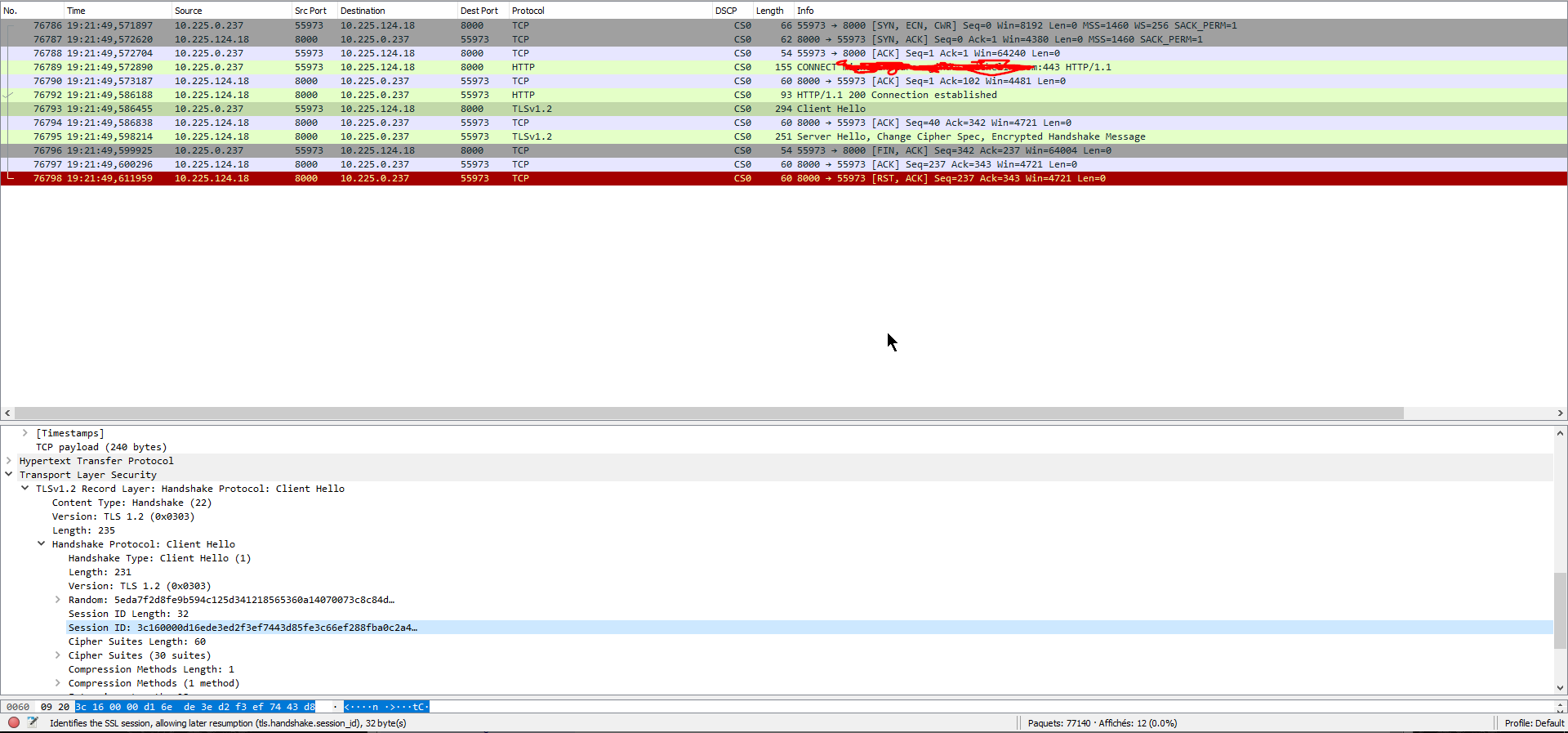

Taking a closer look at the connections that never completed the handshake, I realized they were using the TLS session resumption mechanism, and thought that maybe we were onto something. Altering the behavior of the SChannel crypto library on Windows (see the ClientCacheTime setting) allows one to turn off client-side session caching, and thus force the OS to negotiate only full TLS sessions: no more resumption, and every "Hello" message now comes up with an empty session ID.

https://docs.microsoft.com/en-us/windows-server/security/tls/tls-registry-settings
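To picture what the ClientCacheTime change does, here is a toy Python model of a client-side cache for TLS 1.2-style session-ID resumption. It is not SChannel's implementation: the class and method names are hypothetical, and `ttl_ms` merely plays the role of ClientCacheTime (in milliseconds, with 0 disabling the cache, as described on the page above). With caching disabled nothing is remembered, so every ClientHello carries an empty session ID and a full handshake follows.

```python
from dataclasses import dataclass, field


@dataclass
class ClientSessionCache:
    """Toy model of a client-side TLS 1.2 session-ID cache.

    ttl_ms stands in for SChannel's ClientCacheTime (milliseconds);
    0 disables caching. Illustration only, not SChannel's actual code.
    """
    ttl_ms: int
    entries: dict[str, tuple[bytes, int]] = field(default_factory=dict)

    def remember(self, server: str, session_id: bytes, now_ms: int) -> None:
        """Store the session ID the server assigned after a full handshake."""
        if self.ttl_ms > 0:  # with caching off, nothing is ever stored
            self.entries[server] = (session_id, now_ms + self.ttl_ms)

    def hello_session_id(self, server: str, now_ms: int) -> bytes:
        """Session ID for the next ClientHello; b"" means a full handshake."""
        entry = self.entries.get(server)
        if entry is None:
            return b""
        session_id, expires_ms = entry
        if now_ms >= expires_ms:  # expired: forget it, negotiate from scratch
            del self.entries[server]
            return b""
        return session_id  # non-empty: the client asks to resume


cmg = "cmg.example.net"          # hypothetical CMG FQDN
sid = bytes.fromhex("5f3a9c01")  # hypothetical (real IDs are up to 32 bytes)

cached = ClientSessionCache(ttl_ms=60 * 60 * 1000)  # arbitrary 1-hour TTL
disabled = ClientSessionCache(ttl_ms=0)             # like ClientCacheTime = 0
for cache in (cached, disabled):
    cache.remember(cmg, sid, now_ms=0)

print(cached.hello_session_id(cmg, now_ms=1_000) == sid)  # True: resumption offered
print(disabled.hello_session_id(cmg, now_ms=1_000))       # b'': full handshake
```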

This worked, in that we effectively disabled session resumption and now had three full handshakes being negotiated, but we still had no data exchange and no improvement in the tester results...

So yes, what could it possibly be? Having worked on this on and off for days, I thought: well, this thing is a Web-based service after all, so what happens if I try connecting to it through a browser?

I fired up Vivaldi (pure awesomeness, try it), pointed it at our CMG, and all of a sudden got a nasty certificate-related error message:

ERR_CERT_COMMON_NAME_INVALID (-200)

Huh? Why was that? We did have a valid CN representing our CMG, and some alternative names (including a short name) listed in the Subject Alternative Name (SAN) field. That should have been enough, right? Doesn't everyone look at both the CN and the SAN to find out what the certificate was issued for? Oh boy, was I wrong!

Things have not worked like that in ages: years ago, relying on the CN was deprecated in favor of the Subject Alternative Name field whenever that field is populated. Modern browsers do not even look at the CN anymore.

Indeed, a quick search revealed...

The ugly truth!

From RFC2818 - HTTP Over TLS, section 3.1

"If a subjectAltName extension of type dNSName is present, that MUST be used as the identity. Otherwise, the (most specific) Common Name field in the Subject field of the certificate MUST be used. Although the use of the Common Name is existing practice, it is deprecated and Certification Authorities are encouraged to use the dNSName instead."

From RFC6125 - Representation and Verification of Domain-Based Application Service Identity within Internet Public Key Infrastructure Using X.509 (PKIX) Certificates in the Context of Transport Layer Security (TLS), section 6.4.4

"As noted, a client MUST NOT seek a match for a reference identifier of CN-ID if the presented identifiers include a DNS-ID, SRV-ID, URI-ID, or any application-specific identifier types supported by the client.

Therefore, if and only if the presented identifiers do not include a DNS-ID, SRV-ID, URI-ID, or any application-specific identifier types supported by the client, then the client MAY as a last resort check for a string whose form matches that of a fully qualified DNS domain name in a Common Name field of the subject field (i.e., a CN-ID)."
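The rule quoted above is short enough to sketch. The Python below is a simplified illustration, not a complete RFC 6125 implementation: it only handles DNS-IDs and CN-IDs, supports a `*` wildcard as the whole left-most label only, and assumes certificate dicts shaped like `ssl.SSLSocket.getpeercert()` output. The FQDN `cmg.example.net` and the alternative names are hypothetical stand-ins for our situation: a correct CN, but a SAN listing only other names.

```python
def dns_ids(cert: dict) -> list[str]:
    """DNS-IDs: dNSName entries of the SAN extension."""
    return [value for kind, value in cert.get("subjectAltName", ()) if kind == "DNS"]


def cn_ids(cert: dict) -> list[str]:
    """CN-IDs: Common Name attributes of the Subject field."""
    return [value
            for rdn in cert.get("subject", ())
            for key, value in rdn
            if key == "commonName"]


def name_matches(presented: str, hostname: str) -> bool:
    """Exact, case-insensitive match; '*' allowed as the whole left-most label."""
    p_labels = presented.lower().rstrip(".").split(".")
    h_labels = hostname.lower().rstrip(".").split(".")
    if len(p_labels) != len(h_labels):
        return False
    if p_labels[0] == "*":
        return p_labels[1:] == h_labels[1:]
    return p_labels == h_labels


def identity_matches(cert: dict, hostname: str) -> bool:
    presented = dns_ids(cert)
    if not presented:
        # Only when no DNS-ID exists MAY the client fall back to the CN
        # (and modern browsers skip even this last resort).
        presented = cn_ids(cert)
    # If a DNS-ID exists, the CN is never consulted, however correct it is.
    return any(name_matches(name, hostname) for name in presented)


cmg = "cmg.example.net"  # hypothetical CMG FQDN
subject = ((("commonName", cmg),),)
before = {"subject": subject,
          "subjectAltName": (("DNS", "cmg"), ("DNS", "cmg.corp.example"))}
after = {"subject": subject,
         "subjectAltName": (("DNS", cmg), ("DNS", "cmg"), ("DNS", "cmg.corp.example"))}

print(identity_matches(before, cmg))  # False: correct CN, but it is ignored
print(identity_matches(after, cmg))   # True: the FQDN is now a DNS-ID
```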

So we eventually reissued the problematic certificates (Prod and Indus) with a SAN field that properly included the CMG FQDN this time, and all of a sudden things started working.
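A cheap safeguard is to lint certificates before they go anywhere near production, checking that every CN also appears as a DNS entry in the SAN. Here is a minimal Python sketch, again assuming `getpeercert()`-style dicts; the `san_lint` function and the sample names are hypothetical.

```python
def san_lint(cert: dict) -> list[str]:
    """List problems with how a certificate's names are laid out.

    An empty list means every Subject CN also appears as a DNS entry in the SAN.
    """
    cns = [value
           for rdn in cert.get("subject", ())
           for key, value in rdn
           if key == "commonName"]
    dns_names = {value.lower()
                 for kind, value in cert.get("subjectAltName", ())
                 if kind == "DNS"}
    problems = []
    if not dns_names:
        problems.append("SAN has no DNS entries")
    for cn in cns:
        if cn.lower() not in dns_names:
            problems.append(f"CN {cn!r} missing from SAN")
    return problems


# Hypothetical names, mirroring the before/after situation in the text.
broken = {"subject": ((("commonName", "cmg.example.net"),),),
          "subjectAltName": (("DNS", "cmg"),)}
fixed = {"subject": ((("commonName", "cmg.example.net"),),),
         "subjectAltName": (("DNS", "cmg.example.net"), ("DNS", "cmg"))}

print(san_lint(broken))  # ["CN 'cmg.example.net' missing from SAN"]
print(san_lint(fixed))   # []
```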

Note: it is quite possible that turning on SChannel advanced logging or the CAPI2 logs would have helped. I do not remember whether we did at some point, but we were so focused on the network aspects that this did not strike us at the time.

Moral of the story

- Break complex problems into smaller pieces that you will address one by one.

- Do not assume that things work like you THINK they should. They do not.

- Get your crypto / PKI skills up to date: everything is TLS these days.

- Network traces can only tell you so much (but they help a lot).

- Attack the problems from multiple angles (see Note above).

- Reading RFCs is TECH.