Cloud to Cloud automation with ServiceNow

Here is a short article about the recent experience of my department with automation. I will not bother you with technical details, but rather highlight key findings and points that ultimately mattered.

The use case: Office 365 room management

We decided to stop managing Office 365 rooms manually and to leverage automation instead, freeing our internal teams from the hassle of handling them. As you can imagine, in a company as big as ours there is always some facility-related event somewhere that requires meeting rooms to be created, changed, or simply removed from the system. Automating such requests would allow us to reassign scarce admin time to higher-value activities.

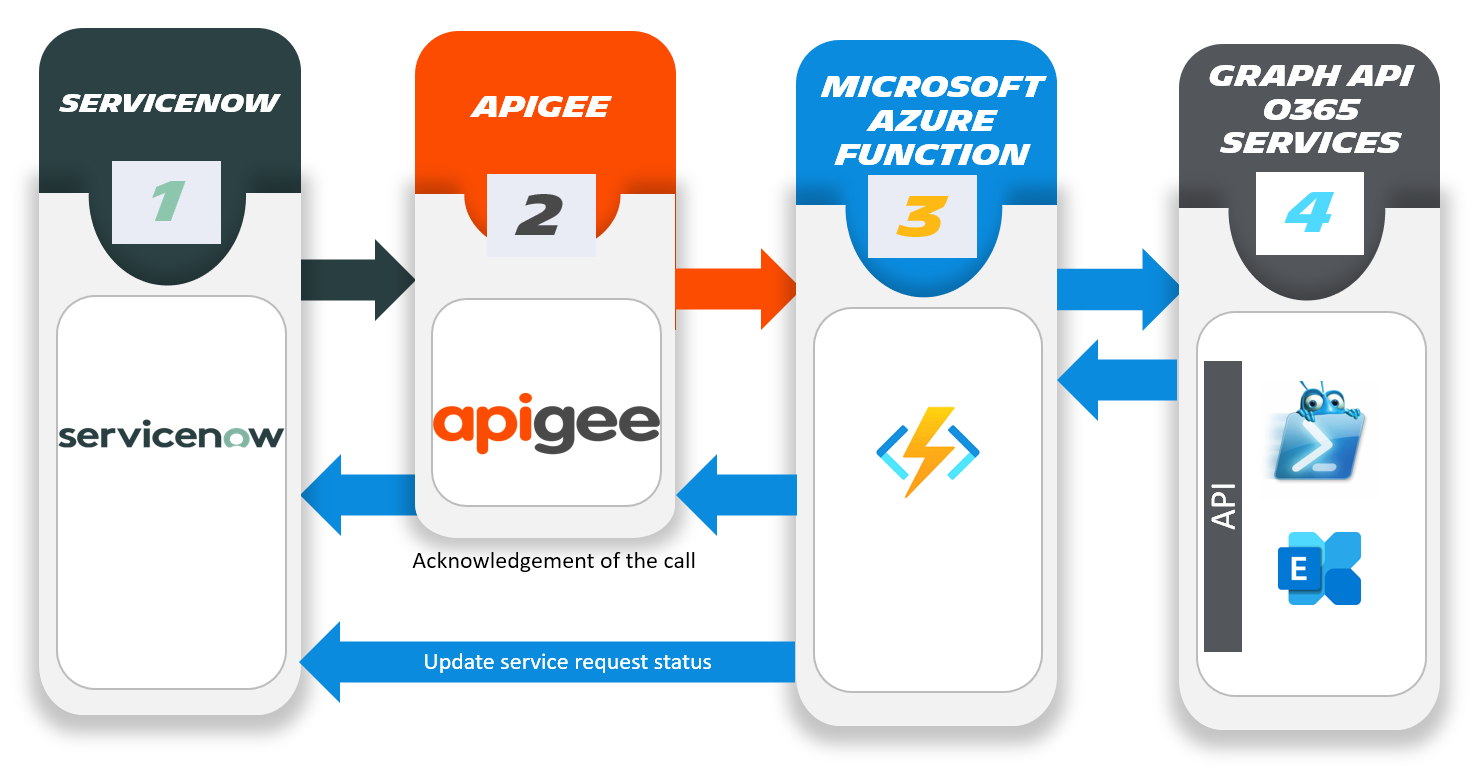

Until now, going through a MID server (Management, Instrumentation and Discovery - MID - essentially a server sitting in a DMZ and acting as a gateway between ServiceNow and on-premises environments) had been our preferred approach for ServiceNow automation. This time, however, it made little sense given that our target was in the Cloud. Hence the decision to go with a Cloud to Cloud automation flow, from ServiceNow to Office 365/Azure AD, with no intermediate layer of any kind. Surely it should be simple? Here is what we learned along the way.

Be clear about your requirements... and write them down to ensure buy-in

We thought it would be fine to take as our starting point the existing manual approach and the way WE understood room management, which after all seemed OK. Guess what? It was not.

We eventually discovered this the hard way during testing. Even for a "simple" case like room management, people had different expectations. Those raising requests (our true clients, as we realized pretty late) and those processing them (our admins) simply were not aligned. This often resulted in rework, which in turn increased code complexity and the overall time spent engineering the solution.

So we had to sit down and talk to people to understand their needs and what rooms meant to them. In other words, we had to communicate: get everyone on the same page, establish what is in scope and what is not (such as rarely encountered niche cases not worth spending time on), and generally manage stakeholders, the basics of any project really. This was key here, just as it is on bigger endeavors. The last thing you want is unhappy technical teams dealing with failed automated tasks and users complaining that it was "better" before. We definitely underestimated this.

Data quality matters!

Having templates for humans to raise requests to other humans and having templates for humans to trigger some form of automation are NOT the same thing.

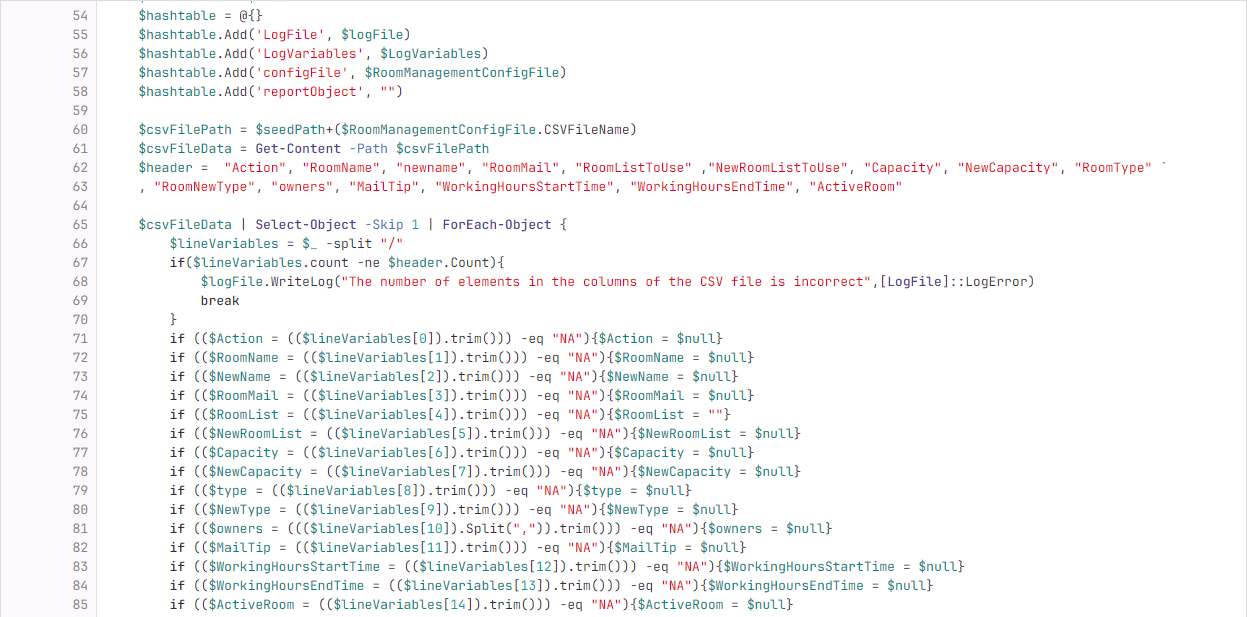

It was hardly a surprise, but we quickly discovered that we had to lock everything down so that inputs would be consistently predictable and our scripts would not fail miserably. We had to revamp the 3 main forms used to issue requests (create / modify / delete) to make them as foolproof as possible (regular expressions, drop-down boxes, integrity checks, etc.). We also had to clean up existing objects, removing duplicates and fixing improperly formatted rooms. These were the result of poorly defined or varying specs, coupled with the use of many different scripts, sometimes buggy, over time...
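To give an idea of what "locking down" inputs can look like on the script side, here is a minimal sketch. It is written in Python purely for illustration and is not taken from our forms or scripts: the field names (`action`, `alias`, `capacity`), the alias pattern and the capacity limits are all hypothetical.

```python
import re

# Hypothetical rules for illustration only; the real form constraints differ.
ALIAS_PATTERN = re.compile(r"^[a-z0-9][a-z0-9-]{2,30}$")
ALLOWED_ACTIONS = {"create", "modify", "delete"}


def validate_room_request(request: dict) -> list[str]:
    """Return a list of problems; an empty list means the input is usable."""
    problems = []

    # Equivalent of a drop-down box: only a fixed set of values is accepted.
    action = request.get("action")
    if action not in ALLOWED_ACTIONS:
        problems.append(f"unknown action: {action!r}")

    # Equivalent of a regular-expression check on a free-text field.
    alias = request.get("alias", "")
    if not isinstance(alias, str) or not ALIAS_PATTERN.fullmatch(alias):
        problems.append(f"alias has an unexpected format: {alias!r}")

    # Integrity check that only applies when a room is being created.
    if action == "create":
        capacity = request.get("capacity")
        is_whole_number = isinstance(capacity, int) and not isinstance(capacity, bool)
        if not is_whole_number or not 1 <= capacity <= 500:
            problems.append(f"capacity must be a whole number from 1 to 500: {capacity!r}")

    return problems
```

Returning every problem at once, rather than stopping at the first one, lets a rejected request be corrected in a single round trip.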

Plan on reusing your scripts out of the box? Think twice!

As you may have gathered, requests were historically processed by our admin teams through scripts (fortunately we are past clicking everywhere in the UI), so we naturally thought of reusing them... Except that was easier said than done.

Inputs, for example, were no longer interactive or XML based: we needed to adapt to JSON payloads from ServiceNow. And where to run the scripts from? Certainly not from our on-premises servers, as we had been doing. We needed something in the Cloud, which led us to settle on Azure Durable Functions. These are not easily tamed, however, and we spent quite some time figuring out how to rework our scripts to function properly in that serverless environment: custom module import, use of Kudu, etc.
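As an illustration of the shift from interactive input to JSON payloads, the sketch below turns a request body into a typed object and rejects anything malformed before the real work starts. It is written in Python for brevity and is only an assumption about what such a step could look like: the `RoomRequest` type and the `ticket`, `action` and `alias` fields are hypothetical, not the actual payload ServiceNow sends us.

```python
import json
from dataclasses import dataclass

# Hypothetical field names; the real payload layout is not shown in this article.
REQUIRED_FIELDS = ("ticket", "action", "alias")


@dataclass(frozen=True)
class RoomRequest:
    ticket: str
    action: str
    alias: str


def parse_room_request(body: str) -> RoomRequest:
    """Parse a JSON request body, raising ValueError on any malformed input."""
    try:
        data = json.loads(body)
    except json.JSONDecodeError as exc:
        raise ValueError(f"payload is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("payload must be a JSON object")

    missing = [key for key in REQUIRED_FIELDS if key not in data]
    if missing:
        raise ValueError(f"payload is missing fields: {', '.join(missing)}")

    return RoomRequest(
        ticket=str(data["ticket"]),
        action=str(data["action"]),
        alias=str(data["alias"]),
    )
```

Funnelling every failure into a single exception type keeps the caller simple: it either gets a usable request or one clear reason to reject it.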

Do not forget Security

With scripts now in the Cloud and running unattended, how do you deal with authentication and credential management?

There are no longer humans around to authenticate or handle MFA requests. So you end up using either managed identities, or applications scoped with the proper rights, whose secrets or certificates you then need to manage if you choose to go that route. This in turn introduces nice things like Azure Key Vault for you to play with. And all of a sudden authentication, which used to be a non-issue, becomes a major topic.

Test, test and test some more...

As usual, it is just when you think you have everything figured out and ready for Production that testing proves you wrong.

All of a sudden, use cases that you did not think of and that never surfaced before, but that are important to people, start appearing, along with weird interactions you never anticipated. They force you to debug and reconsider your code. You certainly do not want to start piling up special-case conditions, which is why you need a true understanding of what you missed, including circling back with requesters, to come up with elegant solutions. That takes... time, which leads me to my next point.

"If I could save time in a bottle"

If, like us, you are ambitious, you will set deadlines and tell management this will be done in no time. After all, from a distance, all of this looks easy and rather straightforward. Indeed, how hard could room management be?

Once you have pushed back deadlines for the 4th time in a row and realized you are struggling at every step along the way, you start treading more cautiously. Overconfident in our abilities, we presumed it was "only" PowerShell and underestimated the time required to get familiar with new components, new rules, and new ways of working. We got it all: timeouts, authentication issues, malformed requests, payload issues, API proxy shenanigans, undocumented use cases, etc. So plan ahead and, especially if you are new to this, do not commit lightly, as the learning curve IS steep. #humility

Think about your support model

Since doing automation that way was kind of new to us, we set up a specific team to kickstart the initiative. However, we decided early on that our Collaboration team, which owns the underlying O365 service (and thus rooms), would also support and maintain the code behind all this over time.

This has multiple benefits: it frees up the automation team to work on other topics, and it empowers and upskills the owner team, making them more familiar with the solution and even able, in time, to automate other use cases by themselves. Some specific features were designed with this support model in mind, such as explicit error codes outlining where in the process something failed, or training sessions focused more on debugging and troubleshooting the solution itself than on how to use it.
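To illustrate the idea of error codes that say where in the process something failed, here is a minimal sketch. It is written in Python for illustration and is an assumption about how such a feature could be built, not our implementation: `StageError`, `run_stages`, and the codes and stage names in the usage note are all hypothetical.

```python
from typing import Callable

# A stage is (error code, human-readable stage name, function to run).
Stage = tuple[str, str, Callable[[dict], None]]


class StageError(Exception):
    """Failure tagged with the code and name of the stage that raised it."""

    def __init__(self, code: str, stage: str, cause: Exception):
        super().__init__(f"{code} ({stage}): {cause}")
        self.code = code
        self.stage = stage


def run_stages(stages: list[Stage], context: dict) -> None:
    """Run each stage in order; stop at the first failure and report its code."""
    for code, stage, step in stages:
        try:
            step(context)
        except Exception as exc:
            # Wrap the original error so support sees where it happened,
            # while the underlying cause stays attached for debugging.
            raise StageError(code, stage, exc) from exc
```

A caller would pass something like `[("E100", "validate input", validate), ("E200", "update room", update)]`, where `validate` and `update` are its own functions. If `update` fails, the resulting `StageError` carries `E200`, which tells the support team which part of the flow to look at first.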

Conclusion

It took us months before we could move this to Production, and even then we spent quite some time squashing bugs and addressing small issues here and there. Although it was quite the bumpy ride, having compiled all these hard-learned lessons into a nice technical guide makes us confident they will be put to good use in 2024, as we tackle our next use case: Office 365 shared mailbox management.

Hopefully you too will be able to benefit from our experience, as the specific use case addressed here was only a backdrop for rather general lessons applicable to a wide range of similar activities.

When I discussed the matter with our very own Scott Hughes, he reminded me that some of the above are invariants, such as making sure requirements are properly documented, shared and agreed upon by stakeholders. Having been a Project Manager for almost 15 years, I clearly should have paid more attention to this at the beginning of our journey. So, if anything, may this post serve as a good reminder that no matter how experienced you are, the basics must not be forgotten.

Super Special Thanks to Rajat Khonde and Stephane Acknin who did all the heavy lifting and made this happen while I watched and followed from a distance.